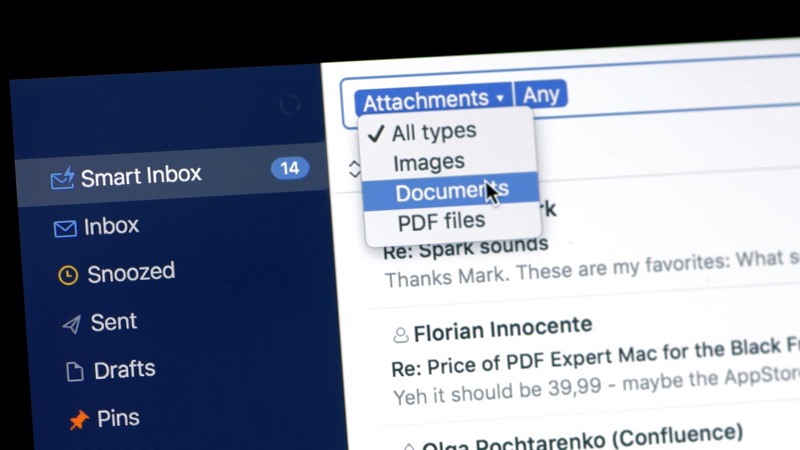

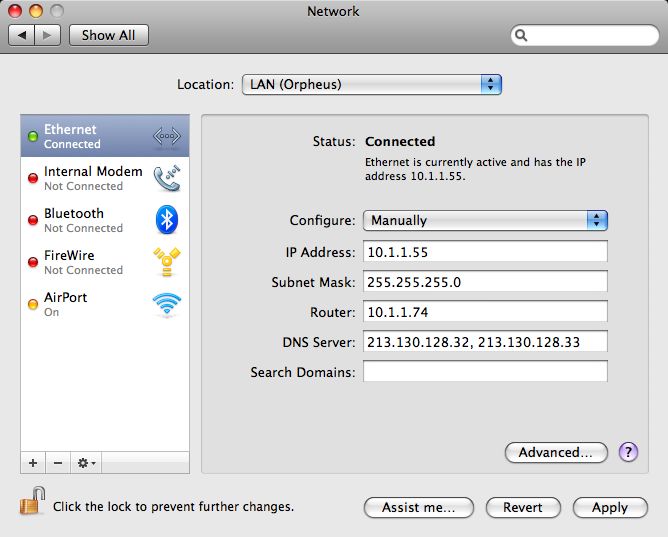

In my system, the downloaded file is saved to this folder: `~/Downloads/spark-3.0.1-bin-hadoop3.2.tgz`.

Create the target folder with the following command and unpack the package into it:

    mkdir ~/hadoop/spark-3.0.1

The Spark binaries are unzipped to the folder `~/hadoop/spark-3.0.1`.

Set up the SPARK_HOME environment variable and add the bin subfolder to the PATH variable. We also need to configure the Spark environment variable SPARK_DIST_CLASSPATH to use the Hadoop Java class path. Edit the .bashrc file:

    vi ~/.bashrc

Add the following lines to the end of the file:

    export SPARK_HOME=~/hadoop/spark-3.0.1
    # Configure Spark to use Hadoop classpath
    export SPARK_DIST_CLASSPATH=$(hadoop classpath)

Then source the modified file to make the changes effective.

If you also have Hive installed, change SPARK_DIST_CLASSPATH to:

    export SPARK_DIST_CLASSPATH=$(hadoop classpath):$HIVE_HOME/lib/*

Run the following command to create a Spark default config file:

    cp $SPARK_HOME/conf/ $SPARK_HOME/conf/nf

Edit the file to add some configurations using the following command:

    vi $SPARK_HOME/conf/nf

Make sure you add the following line:

    localhost
    # Enable the following one if you have Hive installed.

There are many other configurations you can do. Two of them concern event logs, and they can be the same or different: the first configuration is used to write event logs when a Spark application runs, while the second directory is used by the history server to read event logs. You can configure these two items accordingly.

Now let's do some verifications to ensure it is working. Run the following command to start the Spark shell:

    spark-shell

The interface looks like the screenshot. By default, the Spark master is set as local in the shell.

Run the Spark Pi example via the following command:

    run-example SparkPi 10

On this website, I've provided many Spark examples.

When a Spark session is running, you can view the details through the UI portal. The address of the Spark context Web UI is printed out in the interactive session window. The URL is based on the Spark default configurations. The port number can change if the default port is already in use. Refer to Fix - ERROR SparkUI: Failed to bind SparkUI for more details.

If you've configured Hive in WSL, follow the steps below to enable Hive support in Spark. Copy the Hadoop core-site.xml and hdfs-site.xml and the Hive hive-site.xml configuration files into the Spark configuration folder:

    cp $HADOOP_HOME/etc/hadoop/core-site.xml $SPARK_HOME/conf/
    cp $HADOOP_HOME/etc/hadoop/hdfs-site.xml $SPARK_HOME/conf/
    cp $HIVE_HOME/conf/hive-site.xml $SPARK_HOME/conf/

And then you can run Spark with Hive support (enableHiveSupport function):

    from pyspark.sql import SparkSession

Make sure the HiveServer2 service is running before starting this command. You should be able to see the HiveServer2 web portal if the service is up.

For Spark as the third-party email application, open Settings > Mail Accounts > Add Account > Set Up Account Manually. Enter your email and password and choose Open Advanced Settings. Fill out the new settings here and tap Log In. Alternatively, fill out the fields, check advanced settings (ports, protection, etc.), and click Add. Ask the administrator if access to Spark (as the third-party email application) or Google (Spark uses its servers) is allowed. Some organizations enable a firewall to prevent access to certain websites from their networks.
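For the Hive support step, only the import line of the PySpark listing is shown, so here is a minimal sketch of what such a session could look like. It is not the original code: the helper names `missing_conf_files` and `create_hive_session` and the application name are hypothetical, chosen for illustration. It assumes PySpark is importable and that the three configuration files were copied into `$SPARK_HOME/conf` as described.

```python
from pathlib import Path

# Files the tutorial copies into $SPARK_HOME/conf before enabling Hive support.
REQUIRED_CONF_FILES = ("core-site.xml", "hdfs-site.xml", "hive-site.xml")


def missing_conf_files(spark_home):
    """Return the required config files not yet present in <spark_home>/conf.

    Hypothetical helper: it only checks that the files exist, not their content.
    """
    conf_dir = Path(spark_home) / "conf"
    return [name for name in REQUIRED_CONF_FILES if not (conf_dir / name).is_file()]


def create_hive_session(app_name="hive-support-check"):
    """Build a SparkSession with Hive support enabled.

    The app name is an arbitrary placeholder. PySpark is imported lazily so
    that the file check above can run on a machine without PySpark.
    """
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .appName(app_name)
        .enableHiveSupport()  # use Hive settings from hive-site.xml
        .getOrCreate()
    )
```

Once `missing_conf_files` returns an empty list for your SPARK_HOME folder, calling `create_hive_session().sql("SHOW DATABASES").show()` should list the Hive databases, provided the Hive services are running and reachable.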
